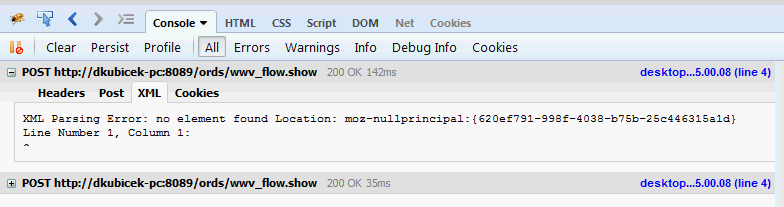

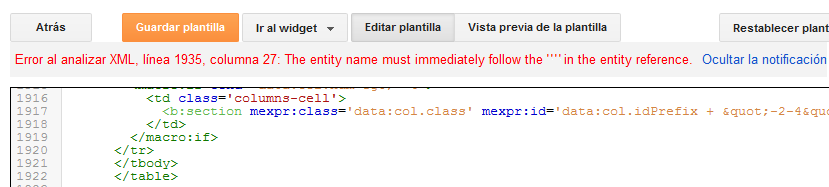

I have the following XML parsing code in my application:

    public static XElement Parse(string xml, string xsdFilename)
    {
        var readerSettings = new XmlReaderSettings { /* ... */ };
        // ...
        readerSettings.ValidationFlags |= XmlSchemaValidationFlags.ProcessInlineSchema;
        readerSettings.ValidationFlags |= XmlSchemaValidationFlags.ProcessSchemaLocation;
        readerSettings.ValidationFlags |= XmlSchemaValidationFlags.ReportValidationWarnings;
        // ...
        var readerContext = new XmlParserContext(null, null, null, XmlSpace.Default, Encoding.UTF8);
        return XElement.Load(XmlReader.Create(new StringReader(xml), readerSettings, readerContext));
    }

I am using it to parse strings sent to my WCF service into XML documents, for custom deserialization.

It works fine when I read in files and send them over the wire (the request); I've verified that the BOM is not sent across. In my request handler I'm serializing a response object and sending it back as a string. The serialization process adds a UTF-8 BOM to the front of the string, which causes the same code to break when parsing the response, with an error:

    SystemException: Data at the root level is invalid.

In the research I've done over the last hour or so, it appears that XmlReader should honor the BOM. If I manually remove the BOM from the front of the string, the response XML parses fine.

Am I missing something obvious, or at least something insidious?

EDIT: Here is the serialization code I'm using to return the response:

    private static string SerializeResponse(Response response)
    {
        // ...
        new XmlSerializer(typeof(Response)).Serialize(writer, response);
        // ...
    }

If it's just a matter of the XML incorrectly containing the BOM, then I'll switch to `var responseXml = new UTF8Encoding(false).GetString(bytes);` but it was not clear at all from my research that the BOM was illegal in the actual XML string.

But how on earth does the string know or "preserve" that information about the 3-byte BOM? I was expecting that by turning the byte array into a UTF-8 encoded string, any differences would go away and the BOM should no longer be relevant.

I advocate encoding as Unicode wherever possible (see also the 10 Commandments of Unicode). That said, XML allows the representation of any Unicode character via escape entities (e.g. 'A' could be represented by `&#x41;`), so it isn't necessarily a requirement to avoid data loss.
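
The puzzle of how a decoded string can still "carry" the BOM can be illustrated with a small sketch. It is written in Java rather than the question's C#, and the names `BomStringDemo` and `stripBom` are hypothetical, introduced only for illustration. It assumes a decoder that does not strip the mark (Java's UTF-8 decoder does not): the three BOM bytes then decode to the single character U+FEFF, which stays at the front of the string until something removes it.

```java
import java.nio.charset.StandardCharsets;

final class BomStringDemo {
    /** U+FEFF, the code point that the byte order mark encodes. */
    static final char BOM = '\uFEFF';

    /** Returns the text without a leading U+FEFF, if one is present. */
    static String stripBom(String text) {
        if (!text.isEmpty() && text.charAt(0) == BOM) {
            return text.substring(1);
        }
        return text;
    }

    public static void main(String[] args) {
        // Hypothetical payload: a BOM followed by a tiny XML document.
        byte[] bytes = "\uFEFF<a/>".getBytes(StandardCharsets.UTF_8);

        // Decoding keeps the BOM as an ordinary character in the string.
        String decoded = new String(bytes, StandardCharsets.UTF_8);

        System.out.println(bytes.length);              // 7 (3 BOM bytes + 4 ASCII bytes)
        System.out.println(decoded.length());          // 5 (1 BOM char + 4 chars)
        System.out.println(decoded.charAt(0) == BOM);  // true
        System.out.println(stripBom(decoded));         // <a/>
    }
}
```

A parser that reads from a character stream sees that extra character before the first `<`, which would be consistent with the "Data at the root level is invalid" error described in the question.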

When I try to upload the XML file to an online service, the service says that my file is wrong at line 1. I have discovered that the problem is caused by the BOM on the first bytes of the file.

The byte order mark is likely to be one of several byte sequences, such as the UTF-8 BOM: `ef bb bf`. These are the variously encoded forms of the Unicode codepoint U+FEFF. This can be expressed as a Java char literal using `'\uFEFF'` (Java char values are implicitly UTF-16). Since U+FEFF isn't in most encodings, it is not possible for this BOM codepoint to be encoded by them.

When it comes to BOMs and XML, they are optional (see also the Unicode BOM FAQ). Detection of encoding in XML is relatively straightforward if the encoding is specified in the declaration. Always make sure that the XML declaration (for example `<?xml version="1.0" encoding="UTF-8"?>`) matches the encoding used to write the document. If you are strict about this, parsers should be able to interpret your documents correctly.
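
The byte-level claims above can be checked with a short Java sketch. The class and helper names (`BomBytesDemo`, `startsWithUtf8Bom`, `toHex`, `canEncodeBom`) are hypothetical, and the sketch handles only the UTF-8 form of the mark. It encodes U+FEFF to show the `ef bb bf` sequence, tests whether a byte array begins with it, and asks a charset whether it can represent the codepoint at all.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

final class BomBytesDemo {
    /** The UTF-8 encoded form of U+FEFF. */
    static final byte[] UTF8_BOM = {(byte) 0xEF, (byte) 0xBB, (byte) 0xBF};

    /** True if the data begins with the three UTF-8 BOM bytes. */
    static boolean startsWithUtf8Bom(byte[] data) {
        if (data.length < UTF8_BOM.length) {
            return false;
        }
        for (int i = 0; i < UTF8_BOM.length; i++) {
            if (data[i] != UTF8_BOM[i]) {
                return false;
            }
        }
        return true;
    }

    /** Formats bytes as space-separated lowercase hex pairs. */
    static String toHex(byte[] data) {
        StringBuilder sb = new StringBuilder();
        for (byte b : data) {
            if (sb.length() > 0) {
                sb.append(' ');
            }
            sb.append(String.format("%02x", b & 0xFF));
        }
        return sb.toString();
    }

    /** True if the charset has an encoding for U+FEFF. */
    static boolean canEncodeBom(Charset charset) {
        return charset.newEncoder().canEncode('\uFEFF');
    }

    public static void main(String[] args) {
        byte[] encoded = "\uFEFF".getBytes(StandardCharsets.UTF_8);
        System.out.println(toHex(encoded));                              // ef bb bf
        System.out.println(startsWithUtf8Bom(encoded));                  // true
        System.out.println(canEncodeBom(StandardCharsets.UTF_8));        // true
        System.out.println(canEncodeBom(StandardCharsets.ISO_8859_1));   // false
    }
}
```

A check like `startsWithUtf8Bom` could run on a file's first bytes before upload, so that the mark can be skipped when the receiving service rejects it.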
